Roblox is once again the target of online child safety advocates, as it faces another lawsuit that claims the platform is “choosing profits over child safety.”

The lawsuit, filed by Louisiana Attorney General Liz Murrill, alleges the platform has "knowingly and intentionally" failed to institute "basic safety controls," exposing young players to predatory behavior and child sexual abuse material. Murrill also alleges the platform has failed to properly warn parents of the potential dangers children face when playing Roblox.

In a series of tweets posted to X, Murrill claimed the platform was “perpetuating violence against children and sexual exploitation for profit” and called many of the site’s gaming worlds, which are built by users and played by millions of children around the world, “obscene garbage.” Murrill also posted several images of what were allegedly publicly available game experiences hosted on the platform, including “Escape to Epstein Island” and “Public Showers.” Similar legal actions have been taken against other popular social media platforms — including Meta, TikTok, and Snapchat — amid growing concern for youth online safety and mental health.

Roblox has been on a mission to reform its image following a series of reports claiming the online gaming site is dangerous for young children, allegedly because it failed to curb a network of predatory adult users. In 2023, a class action lawsuit was filed against the platform on behalf of parents, claiming the company falsely advertised its site as safe for children.

Since then, Roblox has introduced a swath of new safety features, including extensive blocking tools, parental oversight, and messaging controls. The platform recently introduced selfie-based age verification for teen players, though in the lawsuit Murrill claims that a lack of age verification policies makes it easier for predators to interact with children on the platform. Earlier this year, the platform joined other social media companies in backing the newly passed Take It Down Act, which establishes takedown policies and penalties for publishing non-consensual intimate imagery, including deepfakes.

Related Articles

Barkbox Promo Codes and Discounts: Up to 50% Off

Save on Barkbox subscriptions, including monthly themed collections of plush toys, tough...

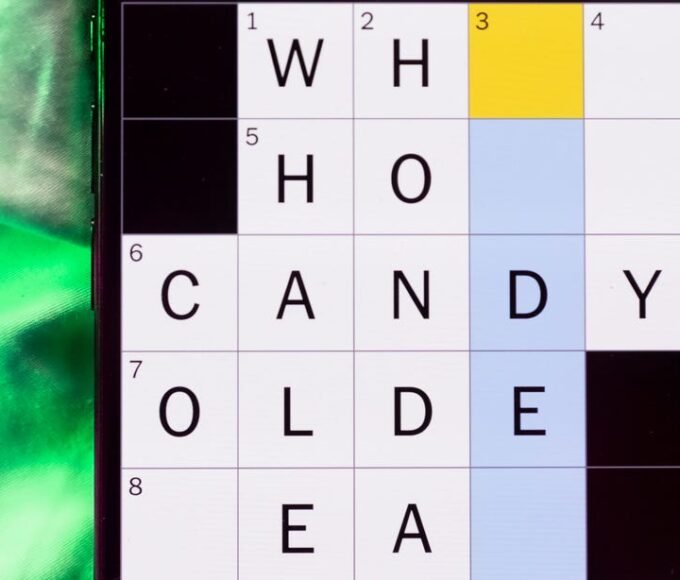

Hurdle hints and answers for March 4, 2026

If you like playing daily word games like Wordle, then Hurdle is...

Today’s NYT Mini Crossword Answers for Wednesday, March 4

Here are the answers for The New York Times Mini Crossword for...

Is that message spam or real? This Android trick helps you ID the scams

Are your chats and DMs flooded with scams? If you have a...

Leave a comment